Scaling Content Audits with an AI-Augmented Workflow

As part of an AI leadership capstone project, I designed and implemented an AI-augmented content audit workflow, transforming a highly manual, time-intensive process into a scalable system that enables faster analysis, broader coverage, and more consistent insights.

~90% reduction in audit time | Analyzed ~971 topics (~395K words) in hours vs. weeks | ~80% accuracy across audit criteria

Manual Audit: 6–11 days (~971 topics reviewed manually)

AI Audit: 2–3 hours (same dataset analyzed)

10x faster

My Role

I led the end-to-end design and implementation of the AI-augmented workflow, including:

Task selection and workflow design.

GenAI tool evaluation and selection.

Prompt engineering and system design.

Validation, analysis, and adoption planning.

The Challenge

Content audits are critical for maintaining quality, consistency, and findability, but are rarely performed at scale due to the effort required.

For a large technical documentation set:

Sample content set reviewed: ~971 topics and ~395,000 words.

A manual audit of this size would take an estimated 6–11 full workdays.

As a result, audits were infrequent, limiting opportunities to improve content quality.

The core challenge was not defining audit criteria, but making the process feasible at scale.

My Approach

1. Identified a high-impact opportunity for AI augmentation

I evaluated multiple content tasks and selected the content audit because of its:

High time investment.

Strategic importance to content quality and design.

Strong alignment with GenAI capabilities (pattern recognition, categorization, summarization).

2. Evaluated and selected the right GenAI tool

I compared general-purpose and specialized tools across accuracy, reliability, and compatibility.

Key insight:

General-purpose tools outperformed specialized tools for this use case.

I selected Claude for its ability to analyze multiple documents and identify patterns across content sets.

4. Developed effective prompt strategies

Prompt design was critical to system performance. I iterated on:

Combined prompts to reduce context loss and improve consistency.

Conversational prompting to validate assumptions and refine outputs.

Structured inputs (custom table of contents file) to resolve hierarchy challenges.

This significantly improved the accuracy and reliability of results.

Audit Prompt

Help me complete an audit of the content in the attached PDF file.

> Part 1 Each set of parent and child topics has a separate TOC and since it is too resource-intensive to crawl each one, I have attached a document that lists the complete set of TOC entries for each parent URL.

Document Format:

=== SECTION START ===
URL: https://docs.xxx.com/.../nfccyy/toc.htm
Section ID: nfccyy
Section Title: New Feature Announcements
TOC:
- New Feature Announcements
- February 2026
- January 2026
=== SECTION END ===

Use this document to create the audit spreadsheet by completing these steps:

> Place the title of each "topic" in a separate row in an Excel spreadsheet.

> Indicate each topic's level in the hierarchy using breadcrumbs that show the path to the topic.

> Summarize the first paragraph of text that appears below the title. When there isn't text following the title (some topics start with tables or notes or have no text at all) indicate that this is the case.

> Place the output of the next 2 steps on the same tab in the spreadsheet as part 1. Generate key findings based on your analysis and place these on a separate tab in the spreadsheet.

> Analyze the contents to determine whether the topic title and the first paragraph (meta description) accurately represents the contents of each topic.

> Analyze whether the format of each title matches the DITA topic type (topic, concept, task, reference) and whether the topic contents is a mix of topic types. Include the analysis in the spreadsheet. Typically topics are overview or title-only topics. Concepts describe how something works and when or why to use it. Tasks include numbered steps. Reference is a list of facts or tables.

Any suggestions or questions before we start?

3. Designed an AI-augmented audit workflow

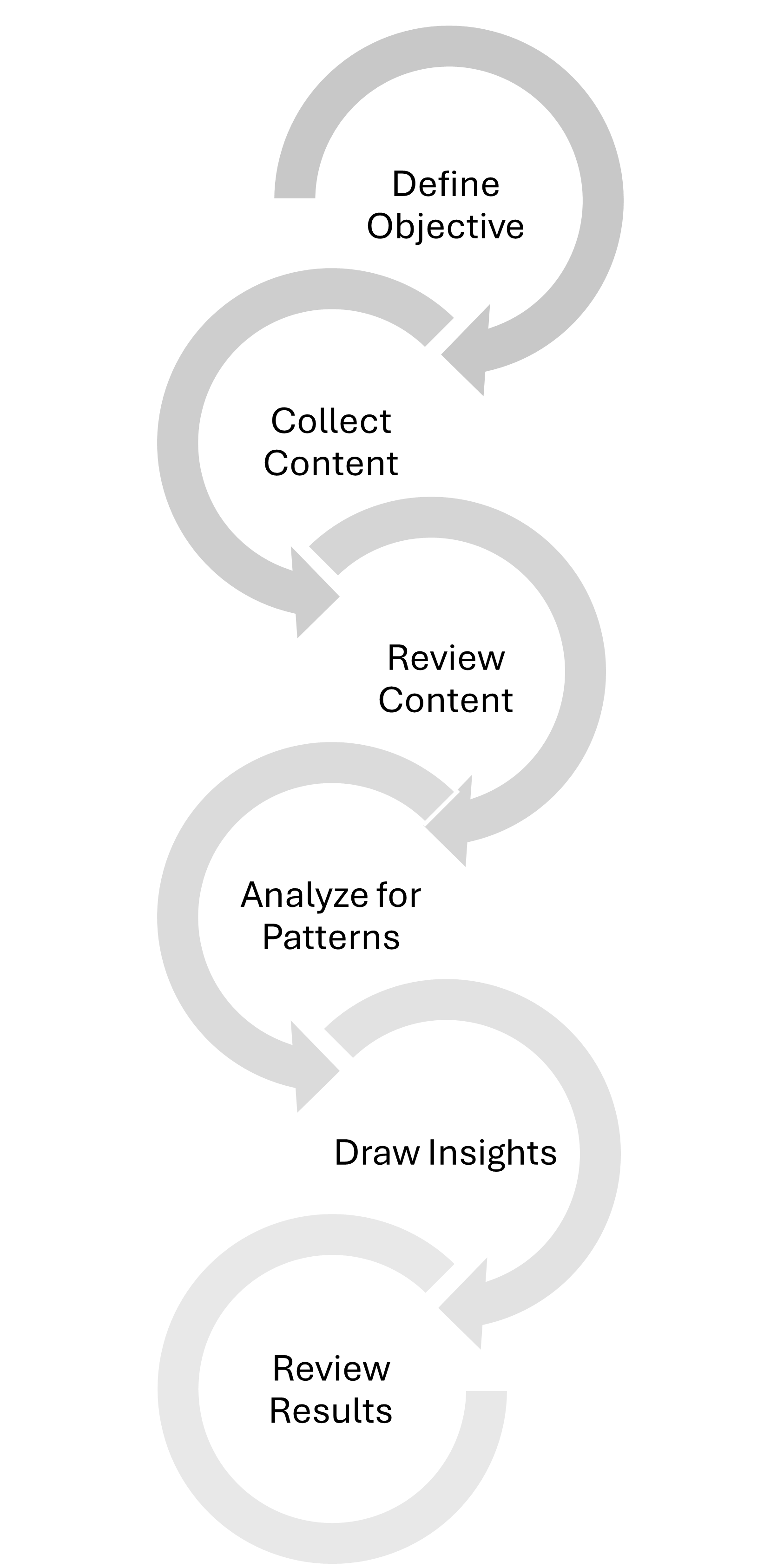

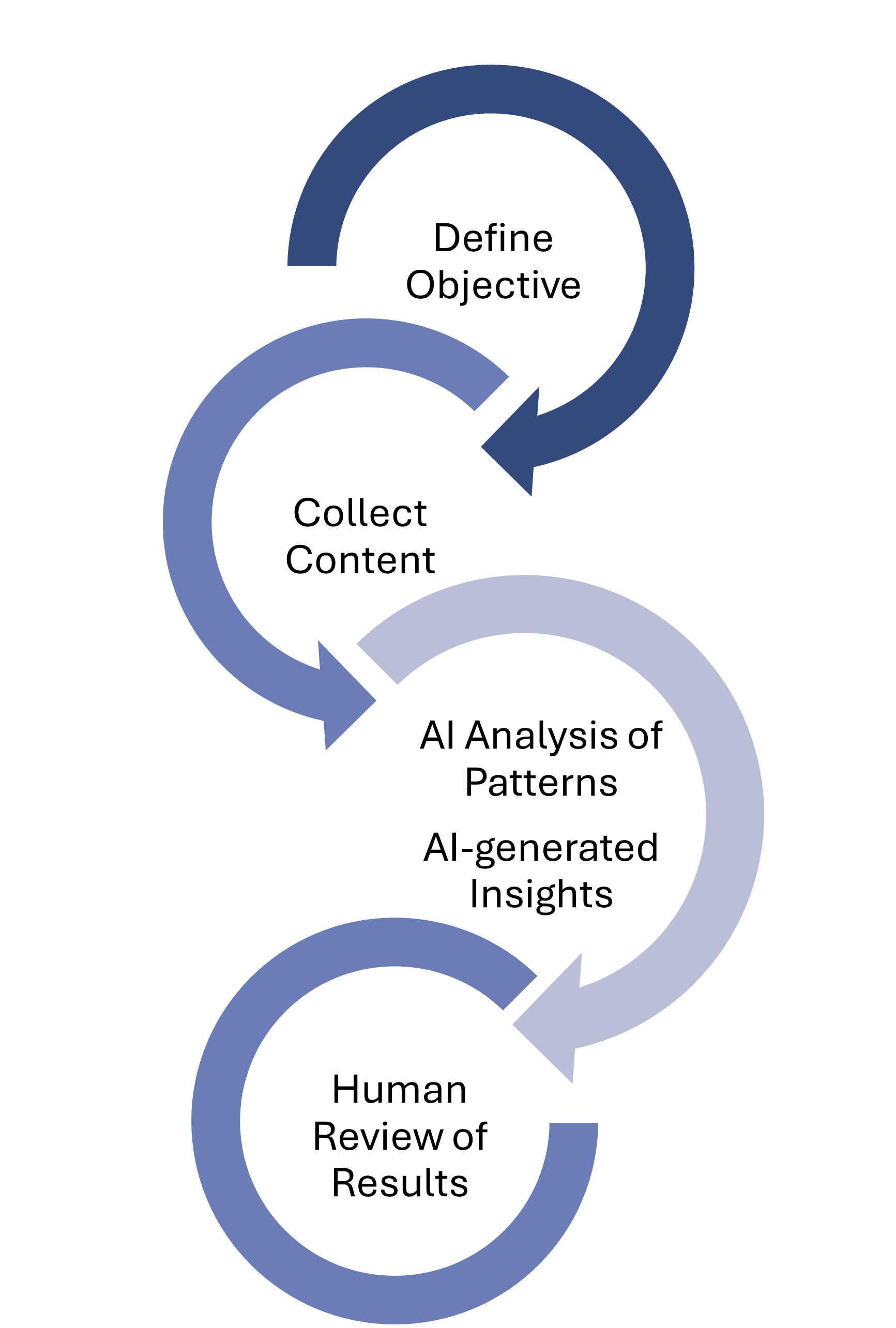

I restructured the traditional audit process into an AI-supported system:

Automated creation of the audit dataset (titles, hierarchy, descriptions).

Applied AI to evaluate sample audit criteria:

Title and metadata accuracy.

Alignment with content types (DITA).

Consolidated outputs into a structured spreadsheet for analysis.

This preserved the analytical rigor of the audit while removing the most manual steps.
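To support human validation of the content-type criterion, a reviewer could cross-check the AI's verdicts against a simple rule-based guess. The sketch below is illustrative only and is not the method used in the project. It follows the topic-type descriptions in the audit prompt (tasks have numbered steps, references are mostly tables, title-only topics are plain topics). The function names `guess_topic_type` and `needs_review`, and the plain-text conventions it relies on (numbered lines, pipe- or tab-delimited table rows), are assumptions.

```python
import re


def guess_topic_type(body: str) -> str:
    """Heuristic guess at a DITA topic type from plain-text topic content.

    Assumes numbered steps look like "1. ..." or "1) ..." and that table
    rows start with "|" or contain tabs. Both are illustrative conventions.
    """
    lines = [ln.strip() for ln in body.splitlines() if ln.strip()]
    if not lines:
        return "topic"  # title-only topic with no body text

    numbered = sum(1 for ln in lines if re.match(r"\d+[.)]\s", ln))
    tabular = sum(1 for ln in lines if ln.startswith("|") or "\t" in ln)

    if numbered >= 2:
        return "task"  # tasks include numbered steps
    if tabular / len(lines) >= 0.5:
        return "reference"  # references are mostly tables or lists of facts
    return "concept"  # default: explanatory prose


def needs_review(ai_type: str, body: str) -> bool:
    """Flag a topic for human review when the heuristic and the AI disagree."""
    return guess_topic_type(body) != ai_type.strip().lower()
```

A disagreement does not mean the AI verdict is wrong; it only gives the reviewer a way to prioritize which rows to check first.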

Traditional Audit Process

AI-Augmented Audit Process

5. Solved structural challenges in content inputs

The biggest barrier was not the AI; it was the content itself.

I addressed:

Inconsistent formatting across source files (PDF, Word, HTML).

Difficulty reconstructing topic hierarchy.

Multi-document structure with 245 subdocuments.

Solution:

Created a structured input file representing the full content hierarchy.

Combined this with source content to improve accuracy to ~80%.
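As an illustration of why a structured input file helps, the sketch below parses a file in the section format shown in the audit prompt and derives a breadcrumb path for each TOC entry. It is a minimal sketch under stated assumptions, not the project's implementation: the names `Section` and `parse_toc_file` are hypothetical, and the use of two-space indentation to mark child entries is an assumption, since the source does not show how nesting is encoded.

```python
from dataclasses import dataclass, field

# Sample in the format from the audit prompt. The indentation of child
# entries is an ASSUMPTION made for this sketch.
SAMPLE = """\
=== SECTION START ===
URL: https://docs.xxx.com/.../nfccyy/toc.htm
Section ID: nfccyy
Section Title: New Feature Announcements
TOC:
- New Feature Announcements
  - February 2026
  - January 2026
=== SECTION END ===
"""


@dataclass
class Section:
    url: str = ""
    section_id: str = ""
    title: str = ""
    breadcrumbs: list[str] = field(default_factory=list)


def parse_toc_file(text: str, indent: int = 2) -> list[Section]:
    """Parse sections and build one breadcrumb string per TOC entry."""
    sections: list[Section] = []
    current = None
    path: list[str] = []  # titles from the top-level entry down to the current one
    in_toc = False
    for raw in text.splitlines():
        line = raw.rstrip()
        stripped = line.strip()
        if stripped == "=== SECTION START ===":
            current, path, in_toc = Section(), [], False
        elif stripped == "=== SECTION END ===":
            if current is not None:
                sections.append(current)
            current = None
        elif current is None or not stripped:
            continue  # ignore blank lines and text outside a section
        elif stripped.startswith("URL:"):
            current.url = stripped[len("URL:"):].strip()
        elif stripped.startswith("Section ID:"):
            current.section_id = stripped[len("Section ID:"):].strip()
        elif stripped.startswith("Section Title:"):
            current.title = stripped[len("Section Title:"):].strip()
        elif stripped == "TOC:":
            in_toc = True
        elif in_toc and stripped.startswith("- "):
            depth = (len(line) - len(line.lstrip())) // indent
            path = path[:depth] + [stripped[2:].strip()]
            current.breadcrumbs.append(" > ".join(path))
    return sections


for section in parse_toc_file(SAMPLE):
    for crumb in section.breadcrumbs:
        print(section.section_id, "|", crumb)
```

Each breadcrumb can then populate the hierarchy column of the audit spreadsheet, so the model does not have to reconstruct the hierarchy from the source files.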

6. Designed for scale and adoption

Beyond the prototype, I defined a rollout strategy:

Standardized prompt library for common audit criteria.

Governance model for usage and quality control.

Feedback loop for continuous improvement.

Phased rollout to support adoption across teams.
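One way a standardized prompt library could work is as a mapping from audit criterion to reusable instruction text, assembled into a single combined prompt so that all criteria share one context. The sketch below is hypothetical: the names `PROMPT_LIBRARY` and `build_audit_prompt`, the criterion keys, and the "Part N" numbering are assumptions, and the instruction text is adapted from the audit prompt rather than copied from an actual library.

```python
# Hypothetical prompt library: criterion key -> reusable instruction text.
PROMPT_LIBRARY = {
    "title_accuracy": (
        "Analyze whether the topic title and the first paragraph "
        "(meta description) accurately represent the contents of each topic."
    ),
    "topic_type": (
        "Analyze whether the format of each title matches the DITA topic "
        "type (topic, concept, task, reference) and whether the topic "
        "mixes topic types."
    ),
}


def build_audit_prompt(criteria, library=PROMPT_LIBRARY):
    """Combine the selected criteria into one prompt with numbered parts."""
    unknown = [name for name in criteria if name not in library]
    if unknown:
        raise KeyError(f"No prompt registered for: {', '.join(unknown)}")

    parts = ["Help me complete an audit of the content in the attached PDF file."]
    for number, name in enumerate(criteria, start=1):
        parts.append(f"Part {number}: {library[name]}")
    parts.append("Any suggestions or questions before we start?")
    return "\n\n".join(parts)


print(build_audit_prompt(["title_accuracy", "topic_type"]))
```

Keeping the instruction text in one reviewed place is what makes governance practical: a change to a criterion is made once and picked up by every team that builds prompts from the library.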

Key Tradeoff

Rather than aiming for full automation, I designed the system as an AI-augmented workflow with human validation.

This meant accepting:

~80% accuracy instead of 100% automation.

Continued need for human review.

In exchange for:

Dramatic efficiency gains.

Higher scalability.

More consistent and repeatable analysis.

By prioritizing augmentation over automation, the system enhanced expert judgment rather than replacing it.
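An accuracy figure of this kind is typically computed by having a reviewer check a sample of AI verdicts and counting agreements per criterion. The sketch below shows one way to do that. The function name `accuracy_by_criterion` and the criterion labels are assumptions, and the sample rows are invented for illustration; they are not data from this audit.

```python
from collections import defaultdict


def accuracy_by_criterion(reviews):
    """Return the share of AI verdicts that match the human verdict.

    `reviews` is an iterable of (criterion, ai_verdict, human_verdict).
    """
    totals = defaultdict(int)
    agreed = defaultdict(int)
    for criterion, ai_verdict, human_verdict in reviews:
        totals[criterion] += 1
        if ai_verdict == human_verdict:
            agreed[criterion] += 1
    return {criterion: agreed[criterion] / totals[criterion] for criterion in totals}


# Illustrative rows only; NOT results from the audit described here.
sample_reviews = [
    ("title_accuracy", "accurate", "accurate"),
    ("title_accuracy", "accurate", "accurate"),
    ("title_accuracy", "inaccurate", "inaccurate"),
    ("title_accuracy", "accurate", "inaccurate"),
    ("title_accuracy", "accurate", "accurate"),
    ("topic_type", "task", "task"),
    ("topic_type", "concept", "concept"),
    ("topic_type", "reference", "concept"),
    ("topic_type", "task", "task"),
]

for criterion, score in accuracy_by_criterion(sample_reviews).items():
    print(f"{criterion}: {score:.0%}")
```

Reporting accuracy per criterion, rather than as one blended number, shows reviewers where human attention is most needed.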

The Impact

Reduced audit time from 6–11 days to ~2–3 hours (~90% reduction).

Successfully analyzed ~971 topics and ~395K words in a single workflow.

Achieved ~80% accuracy across audit criteria, with human validation of the results.

Enabled analysis of larger content sets and more audit criteria than previously feasible.

Created a repeatable framework for scaling content audits across teams.
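The time savings can be sanity-checked with simple arithmetic. The sketch below assumes an 8-hour workday, which the figures above do not specify, and compares the shortest manual estimate against the longest AI run (and vice versa) to get a range. The function names are hypothetical. Under that assumption, the 10x and ~90% headline figures sit below the low end of the computed range, so they read as conservative.

```python
HOURS_PER_WORKDAY = 8  # ASSUMPTION: the source does not define a workday


def speedup_range(manual_days, ai_hours, hours_per_day=HOURS_PER_WORKDAY):
    """Return (lowest, highest) speedup for (min, max) day and hour ranges."""
    manual_lo, manual_hi = (days * hours_per_day for days in manual_days)
    ai_lo, ai_hi = ai_hours
    lowest = manual_lo / ai_hi   # shortest manual audit vs. longest AI run
    highest = manual_hi / ai_lo  # longest manual audit vs. shortest AI run
    return lowest, highest


def time_reduction(speedup):
    """Convert a speedup factor into the fraction of time saved."""
    return 1 - 1 / speedup


low, high = speedup_range(manual_days=(6, 11), ai_hours=(2, 3))
print(f"Speedup: {low:.0f}x to {high:.0f}x")
print(f"Time saved: {time_reduction(low):.1%} to {time_reduction(high):.1%}")
```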

What This Work Demonstrates

Applying AI strategically to high-value content workflows.

Designing systems that scale analysis across large content sets.

Applying strong information architecture and structured content thinking.

Translating experimentation into operational models.

Balancing innovation with practical constraints and governance.