Rebuilding a Fragmented Content Ecosystem into a Scalable Source of Truth

At Oracle, I led the transformation of a fragmented internal content ecosystem into a unified, scalable source of truth, improving usability for over 500 writers while reducing support overhead and duplication across teams.

~3-second findability | ~12% fewer support requests | 500+ writers supported

The Challenge

Internal guidance for authoring and publishing was spread across nine locations and three formats, with some content years out of date.

As a result:

Writers struggled to find accurate information.

Over 60% of teams created and maintained their own guidance.

Content was inconsistent, duplicative, and difficult to trust.

The underlying issue wasn’t just fragmentation; it was the absence of a clear content ecosystem strategy and a sustainable ownership model.

My Role

I owned the end-to-end strategy, including:

Information architecture redesign.

Content model standardization.

Governance and contribution model.

Cross-functional alignment with writers, support, and product teams.

My Approach

1. Grounded the strategy in user behavior

I started by understanding how writers actually worked:

Surveyed writers across teams.

Ran card sorting exercises to map workflows.

Partnered with support to identify recurring issues.

This revealed a critical gap: content was organized around systems, not user tasks.

User Research Summary

-

Executive Summary: A needs assessment survey of 18 User Assistance (UA) Developers revealed widespread reliance on outdated, duplicated, and inconsistent internal guidance. It also exposed a fragmented content ecosystem in which over 60% of teams had built their own supplemental guidance to fill the gaps. The findings informed a prioritized content strategy to modernize the Center of Excellence (CoE) and reduce redundancy across the organization.

Objective: To identify which content UA Developers rely on most, measure how frequently they reference internal and team-specific guidance, and catalog existing team-level resources to inform a consolidated, organization-wide content strategy.

Methodology: An online survey of 18 participants, conducted June 17–28, 2024.

Insight: Over 60% of teams were maintaining their own guidance to compensate for gaps, yet only half of those teams referenced it regularly. This suggested that fragmentation, not lack of effort, was the core problem. The top complaint across respondents was outdated content, cited in nearly every open-ended response.

Action: Content updates were prioritized based on what writers ranked as most important (Editing and Writing Standards, Interface Pages, and Graphics), while low-use guides (DocBuilder and Lockdown) were consolidated or retired. Outreach was also initiated with the 11 teams maintaining separate guidance to identify content worth absorbing into the central CoE.

Impact: High-priority content was updated and expanded for the initial site rollout. The content roadmap was restructured to align updates with tool releases and writer workflows, and a contributor model was established with 12 volunteers to help keep guidance current over time.

-

Executive Summary: A card sort session revealed how UA Developers associate internal guidance with their core workflows. These findings directly informed the navigation structure of the UA Knowledge Center web portal, ensuring the redesign reflected writer mental models rather than internal team assumptions.

Objective: To understand how UA Developers mentally categorize internal guidance, and to use those patterns to inform a navigation structure.

Methodology: A moderated card sorting session was conducted with UA Developers using a collaborative whiteboard tool, including open time for participant comments.

Insight: Authoring was the most common subject area at 17%. Closely related topics (graphics 8%, accessibility 7%, interface pages 4%, links 3%, and content reuse 3%) pointed to authoring as a broad category. Equally revealing was what participants raised unprompted: new writers emerged as the second-highest subject area at 12%. Multiple participants also flagged that the existing CoE was written with a specific toolset in mind, leaving teams that joined through acquisitions without a clear starting point.

Action: Navigation was restructured to reflect how writers actually think about content, grouping authoring and its related subjects into a cohesive cluster.

Impact: The card sort established a content architecture grounded in writer mental models rather than internal team assumptions or system-centric language. The findings also surfaced a gap in onboarding for new and acquired writers. A getting-started video series was added to the roadmap as a priority, with the first video released in the initial site rollout.

-

Executive Summary: Interviews with five Help Desk team members revealed that most support requests involved issues already covered in existing documentation. The team also identified a knowledge gap around DITA fundamentals. The findings informed targeted content updates and structural improvements to the UA Knowledge Center.

Objective: To reduce repeated, simple Help Desk queries and identify knowledge gaps on UA teams so that guidance or training could be developed or highlighted to address them.

Methodology: Five moderated interviews were conducted with Help Desk team members over two days in July 2024. Participants were asked about common questions, recurring issues, root causes, and which tools and features UA Developers struggled with most.

Insight: Documentation existed, but writers weren't reading it. New and acquired writers were arriving without training on fundamental concepts or on how the internal tools connect. Build failures were the most common issue, frequently caused by XML validation errors that could have been caught earlier. Multiple participants flagged interface pages, linking, and REST API documentation as consistently incomplete.

Action: Interface pages and a high-level onboarding video were prioritized for immediate updates. A troubleshooting flow was developed to help writers self-diagnose common build failures. Conceptual content about the toolset and DITA was added to address foundational knowledge gaps.

Impact: High-priority content gaps were addressed in the initial site rollout. A workflow-based structure was adopted across content type guides, and extended onboarding videos were added to the roadmap.

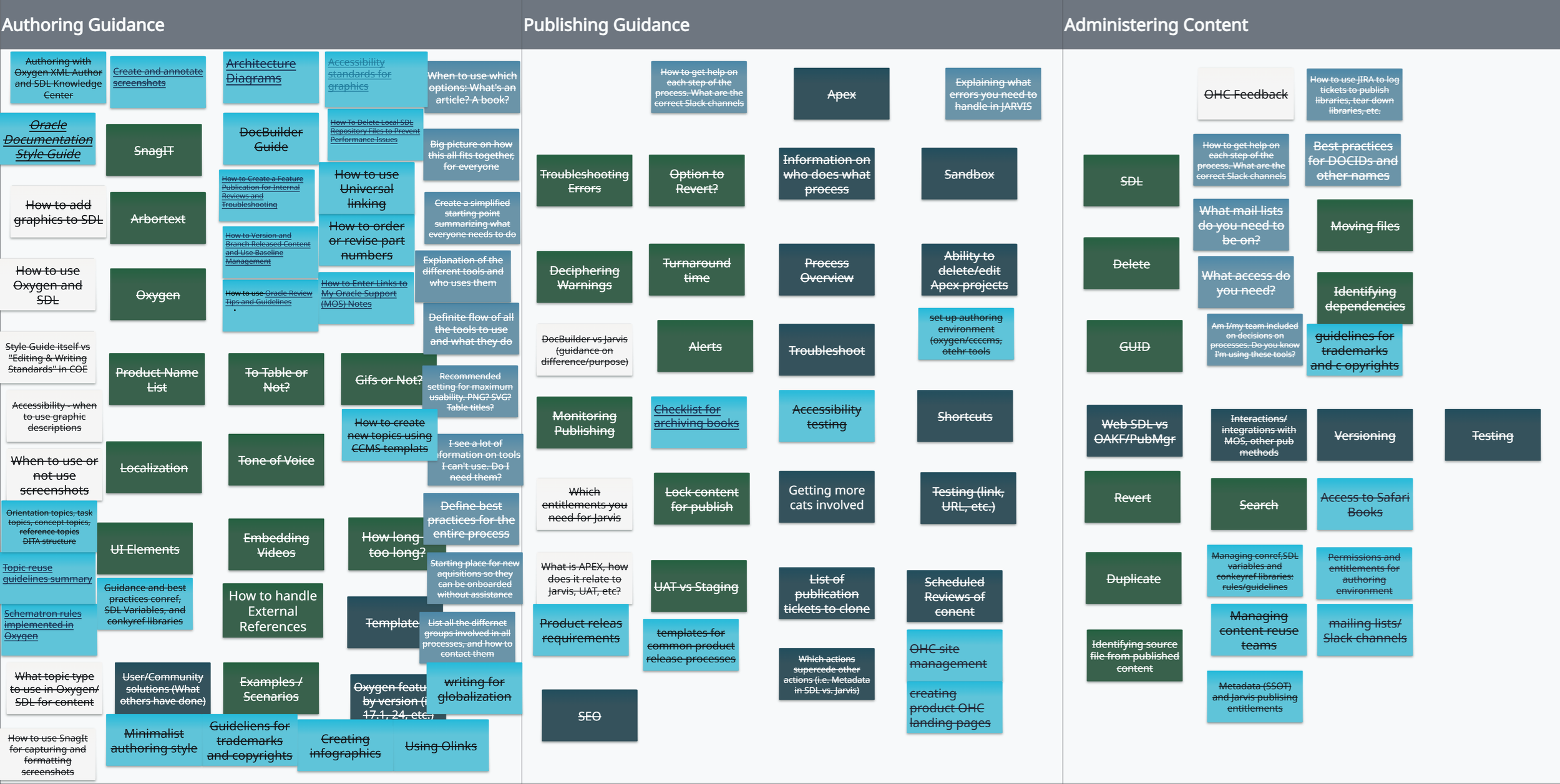

Card Sort Activity

2. Redesigned the information architecture

I restructured the ecosystem to align with real workflows:

Consolidated ~50 system-centric categories into 7 user-centric, workflow-based categories.

Standardized key content types for consistency and reuse.

Clarified ownership boundaries across teams.

This made content significantly easier to navigate and maintain.

Before: “Center of Excellence (CoE)” wiki

Accessibility

Architecture Center

Article publishing

Books

Content Pages

Editing and writing standards

Graphics

Instructional design

Javadocs

Learning paths

Linking

Lockdown

Markdown Authoring

MOOCs

OCI ONSR - Oracle National Security Regions

OHC Feedback

OHC Learn

Online help

Oracle Digital Assistant

Oracle Learning Library

Oracle Review

OU curriculum

Oxygen Authoring/SDL

REST API

Search

Self-publishing

Self-studies and simulations

Tutorials

Translations

After: User Assistance (UA) Knowledge Center web portal

3. Built a scalable operating model

To ensure long-term sustainability, I introduced:

A distributed contribution model with 12 cross-team contributors.

Clear standards and accountability for maintaining content.

Phased delivery to balance speed with quality.

4. Reduced reliance on tribal knowledge

I addressed gaps in onboarding and documentation by strengthening both enablement and the central source of truth.

I created short onboarding videos to help writers quickly understand how the system works and how to navigate it.

In parallel, I expanded the UA Knowledge Center to better represent content standards and best practices, ensuring guidance was clear, accessible, and up to date.

With stronger onboarding and deeper self-serve guidance, writers could apply standards correctly without relying on informal knowledge or support channels.

Key Tradeoff

Rather than removing outdated or redundant content upfront, I prioritized reorganization.

This preserved coverage and improved findability immediately, while content quality improved iteratively.

The Impact

Reduced time to find answers from 5–10 minutes to ~3 seconds.

Decreased support requests by ~12%.

Reduced onboarding time by ~20%.

Eliminated duplicate guidance across teams.

Established a scalable, community-driven model without additional headcount.

What This Work Demonstrates

Designing information architecture at scale.

Turning fragmented systems into cohesive ecosystems.

Building governance models that sustain quality over time.

Driving adoption through usability, not enforcement.